Wait… this is exactly the problem a video codec solves. Scoot and give me some sample data!

I was not talking about classification. What I was talking about was a simple probe of how the compressed size of a collage of similar images compares to the combined size of the images compressed individually. The hypothesis is that a compression codec would compress images with similar color distributions better in a spritesheet than if it encoded each image individually. I don’t know, the savings might be negligible, but I’d assume there is something to gain, at least for some compression codecs. I doubt doing deduplication post compression has much to gain.

I think you’re overthinking the classification task. These images are very similar and I think comparing the color distribution would be adequate. It would of course be interesting to compare the different methods :)
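To make "comparing the color distribution" concrete, here is a minimal sketch, assuming the pixels have already been decoded into `(r, g, b)` tuples. The function names and the bin count are my own picks for illustration, and histogram intersection is just one simple similarity measure among several.

```python
from collections import Counter


def color_histogram(pixels, bins_per_channel=4):
    """Normalized coarse RGB histogram.

    pixels: non-empty iterable of (r, g, b) tuples with values 0..255.
    bins_per_channel: assumed to divide 256 evenly (4, 8, 16, ...).
    """
    step = 256 // bins_per_channel
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    total = sum(counts.values())
    return {bucket: n / total for bucket, n in counts.items()}


def histogram_intersection(h1, h2):
    """Similarity from 0.0 (no shared colors) to 1.0 (same distribution)."""
    return sum(min(share, h2.get(bucket, 0.0)) for bucket, share in h1.items())
```

Two images would count as "similar" when the intersection is above some threshold you pick by looking at your own data.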

The first thing I would do when writing such a paper would be to test current compression algorithms by creating a collage of the similar images and seeing how its size compares to the combined size of the individual images.
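A rough sketch of that experiment, with loud caveats: `zlib` is a general-purpose compressor standing in for a real image codec, and the "images" are synthetic byte strings that differ in a few positions. The helper names and parameters are made up for illustration, so treat it as the shape of the test, not as a result.

```python
import random
import zlib


def make_similar_blobs(count=4, size=4000, changes=40, seed=0):
    """Synthetic stand-ins for similar images: one random base, a few bytes changed per copy."""
    rng = random.Random(seed)
    base = bytes(rng.randrange(256) for _ in range(size))
    blobs = []
    for _ in range(count):
        data = bytearray(base)
        for _ in range(changes):
            data[rng.randrange(size)] = rng.randrange(256)
        blobs.append(bytes(data))
    return blobs


def separate_size(blobs):
    """Total size when every blob is compressed on its own."""
    return sum(len(zlib.compress(blob, 9)) for blob in blobs)


def combined_size(blobs):
    """Size when all blobs are concatenated (the 'collage') and compressed once."""
    return len(zlib.compress(b"".join(blobs), 9))


if __name__ == "__main__":
    blobs = make_similar_blobs()
    print("separate:", separate_size(blobs), "bytes")
    print("combined:", combined_size(blobs), "bytes")
```

With real photos you would swap the zlib calls for the codecs you actually want to compare and feed in the decoded images or the collage.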

Desktop Applications

Unless someone has registered the trademark for those specific purposes, you’re in the clear. A trademark is only valid within a specific field of purpose. Trademarks are there to keep consumers from mistaking one brand for another.

There are a lot of entertaining articles on Techdirt about companies not understanding trademark law.

No, it’s just a service that’s running without me thinking about it.

My setup is:

But I’d like to make a point that’s not being made in any of the other comments. You don’t need your own SMTP server to send e-mail. All you have to do is look up the MX DNS record of the recipient’s domain, connect to that SMTP server and write a few commands, e.g.:

EHLO senderdomain.tld

MAIL FROM:<yourmail@yourdomain.tld>

RCPT TO:<recipient@recipientdomain.tld>

DATA

Subject: Blabla

Bla bla

.
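The same conversation can be sketched with Python’s standard `smtplib`, assuming you have already looked up the MX host yourself (for example with `dig MX recipientdomain.tld`). The function names are mine, the addresses are the placeholders from above, and in practice many receivers will reject or spam-folder mail from hosts without proper reverse DNS, SPF and so on.

```python
import smtplib
from email.message import EmailMessage


def recipient_domain(address: str) -> str:
    """Domain part of an address, i.e. the name to query for MX records."""
    return address.rsplit("@", 1)[1]


def build_message(sender: str, recipient: str, subject: str, body: str) -> EmailMessage:
    """Build the headers and body that go after the DATA command."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = subject
    msg.set_content(body)
    return msg


def send_direct(mx_host: str, msg: EmailMessage, helo_name: str) -> None:
    """Deliver msg straight to the recipient's mail exchanger on port 25.

    mx_host is assumed to come from a separate MX lookup;
    helo_name is the name announced in EHLO.
    """
    with smtplib.SMTP(mx_host, 25, local_hostname=helo_name, timeout=30) as smtp:
        smtp.send_message(msg)  # issues MAIL FROM, RCPT TO and DATA for us
```

Leaving the `with` block sends QUIT, which is the one command missing from the manual session above.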

What’s the font? It looks goooood.

WARNING. Everything other than the last paragraph is kind of rude and opinionated, so skip to the bottom if you only want practical advice and not a philosophical rant.

First of all, Free Software doesn’t need paid developers. We scruffy hackers create software because it’s fun. I have a strong suspicion that the commercialization of Free Software under the business-friendly label “Open Source” is actually creating a lot of shitty software, or at least a lot of good software that’ll be obsoleted to keep business going. Commercialized Free Software doesn’t have an incentive to create good, finished software; quite the opposite. The best open source software from commercial entities is, in my opinion, the software that was open sourced when the product was no longer profitable as a proprietary business. As examples, I love the id Software game engines and Blender. Others seem happy that Sun dumped the source code of StarOffice, which then became OpenOffice and LibreOffice, but then again companies like Collabora are trying to turn it into a shitty webification instead of implementing real collaborative features in the software, like what AbiWord has.

…and back in the real world where you need to buy food: Open Source consultancy, implementation of custom out-of-tree features, support, courses and training, EOL maintenance, or products that leverage Open Source software are my best answers. See Free Software as a commons we all contribute to, so that we can do things with it and build things from it. You should not expect people to pay for Free Software, but you can sell things that take advantage of Free Software as a resource.

Does anyone know of a list of TLDs that don’t allow reselling? I’d prefer to buy/lease one of those and let domain sharks play their own games.

I use gitit and it’s already packaged in most Linux distros.

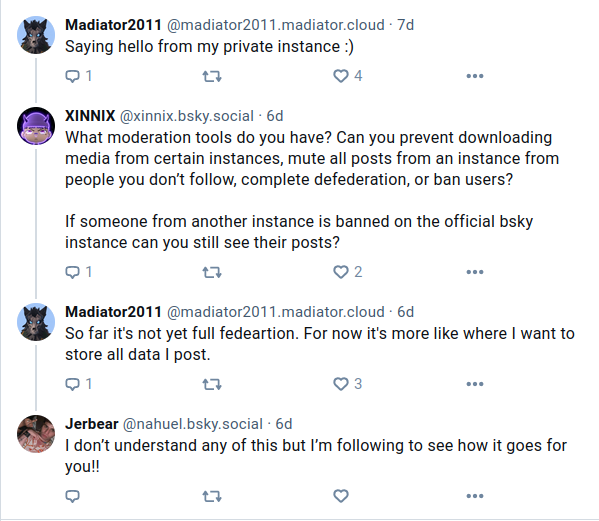

TL;DR: Sorta federation. It is possible to self-host data.

Why not NewPipe?

Yeah, that container probably crashed because of atmospheric disturbance.

I use Devuan and it’s just Debian without systemd.

Because RAM is so cheap, right?

No. Never. It’s a ruse.

Gothub is looking for a new maintainer.

It seems that we are focusing on two different parts of the problem.

Finding the best way to classify which images compress well in bulk is an interesting problem in itself. In this particular case the person asking had already picked out similar images by hand, and they can be identified by their timestamps, which narrows down any similarity comparison. What I wanted to find out was how well the similar images can be compressed with various methods and codecs with minimal loss of quality. My goal was not to use it as a method to classify the images. It was simply to examine how well the compression stage would work with various methods.